Solutions · AI Data Center Infrastructure

Reference architectures for accelerated compute.

Site selection, electrical topology, mechanical systems and network fabric — engineered as one coherent infrastructure for frontier AI workloads.

- Density target: 120 kW+/rack

- Cooling: Liquid-to-chip

- Fabric: Optical, 800G

- PUE: < 1.15
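To make the density and PUE targets concrete, here is a minimal sketch of the arithmetic they imply. It relies on the standard definition of PUE as total facility power divided by IT power. The function names and the 100-rack hall are hypothetical, chosen only for illustration.

```python
def facility_power_kw(racks: int, rack_kw: float, pue: float) -> float:
    """Total facility draw in kW: IT load multiplied by PUE."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return racks * rack_kw * pue


def overhead_kw(racks: int, rack_kw: float, pue: float) -> float:
    """Non-IT draw in kW (cooling, power conversion, and so on)."""
    return facility_power_kw(racks, rack_kw, pue) - racks * rack_kw


if __name__ == "__main__":
    # Hypothetical hall: 100 racks at the 120 kW density target, PUE of 1.15.
    racks, rack_kw, pue = 100, 120.0, 1.15
    print(f"IT load:  {racks * rack_kw:,.0f} kW")
    print(f"Facility: {facility_power_kw(racks, rack_kw, pue):,.0f} kW")
    print(f"Overhead: {overhead_kw(racks, rack_kw, pue):,.0f} kW")
```

Under these assumptions, each 120 kW rack leaves roughly 18 kW of budget for everything that is not compute.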

S/01 · Thesis

Designed for training. Tuned for inference. Hardened for both.

An AI data center is not a hyperscale data center with denser racks. It is a different physical instrument — one where the entire facility behaves as a single, tightly-coupled distributed computer. Every layer of the stack reflects that.

Our reference architectures start from the workload: model size, training regime, inference latency budget, jurisdictional posture. Every downstream choice — site, power, mechanical, network — is governed by those constraints.

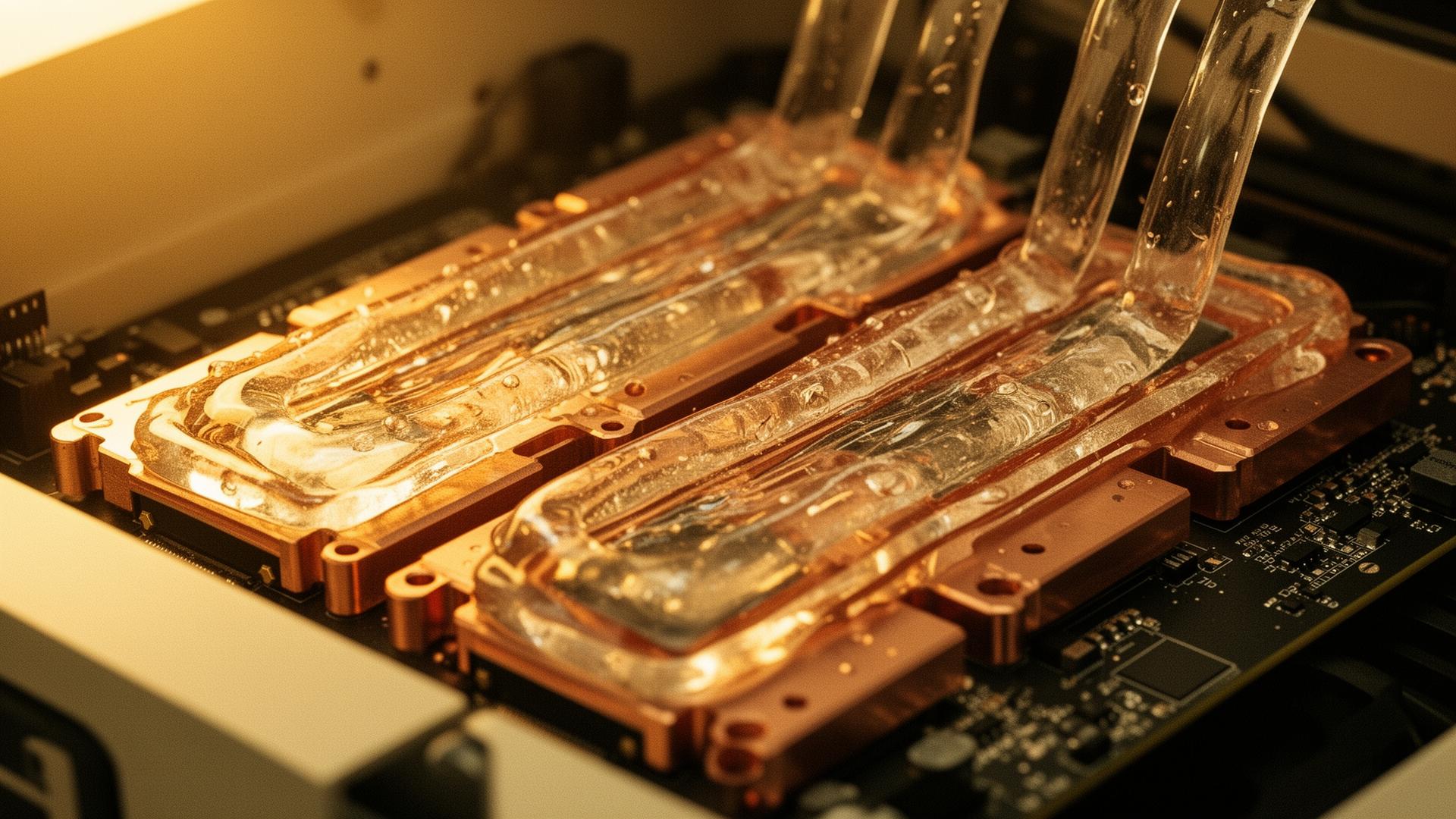

Fig. — In situ

Capabilities

- 01 · Site & power strategy: Grid-adjacent terrain, behind-the-meter generation, multi-source electrical topology.

- 02 · Mechanical systems: Liquid-to-chip cold plates, rear-door HX, two-phase immersion options where density demands.

- 03 · Network fabric: Non-blocking fat-tree, rail-optimized GPU placement, optical interconnect throughout.

- 04 · Resilience design: Concurrent maintainability and fault-tolerant topology without overbuilding redundancy.

- 05 · Sustainability: Heat reclaim, water-positive design where possible, grid-aware load shifting.
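As a rough illustration of what a non-blocking fat-tree implies for scale, the sketch below sizes the classic three-tier k-ary fat-tree built from identical k-port switches. This is the textbook construction, not necessarily the topology used in any particular reference architecture. The function name is hypothetical, and rail-optimized placement is not modeled.

```python
def fat_tree_capacity(k: int) -> dict:
    """Switch and host counts for a three-tier k-ary fat-tree.

    Assumes identical k-port switches and full bisection bandwidth
    (each edge switch splits its ports evenly between hosts and uplinks).
    """
    if k < 2 or k % 2 != 0:
        raise ValueError("k must be an even integer >= 2")
    half = k // 2
    return {
        "pods": k,
        "edge_switches": k * half,         # k/2 per pod
        "aggregation_switches": k * half,  # k/2 per pod
        "core_switches": half * half,
        "hosts": k * half * half,          # k^3 / 4
    }


if __name__ == "__main__":
    for ports in (4, 32, 64):
        print(ports, fat_tree_capacity(ports))
```

With 64-port switches this construction tops out at 65,536 host ports, which shows how closely fabric radix and cluster size are linked.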

— Engage the practice

Audit your AI roadmap with us.

A small number of engagements per quarter. We work with sovereign funds, frontier labs and hyperscale operators on infrastructure that lasts.