The foundational layer of intelligence is physical.

Frontier AI does not run on the cloud you know. It runs on coordinated thermodynamics. This is our reading of the next decade of infrastructure.

- Compute era

- Post-hyperscale

- Density target

- 100–250kW/rack

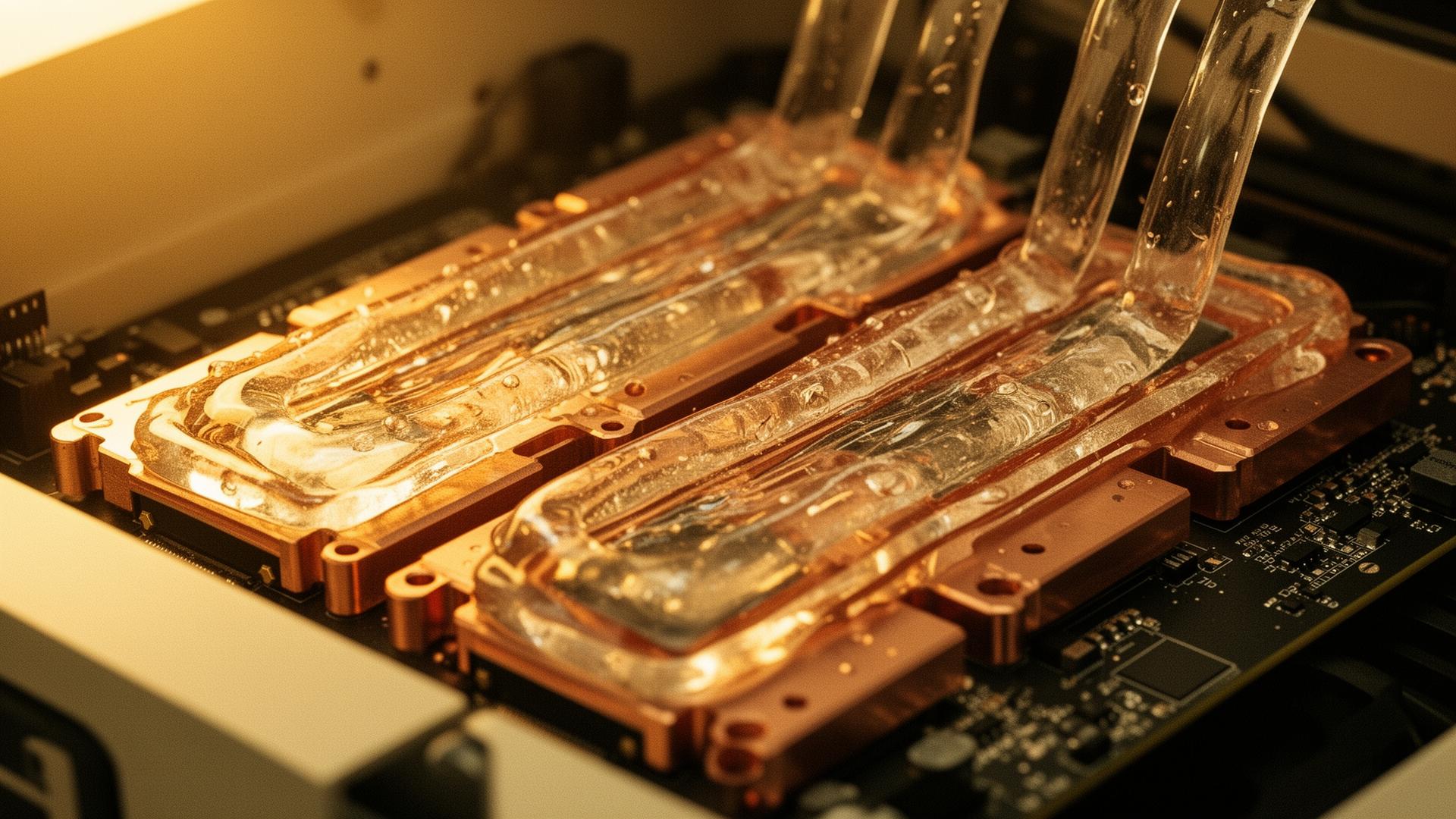

- Cooling

- Liquid-to-chip

- Network

- Optical fabric

From general compute to accelerated intelligence.

Enterprise data centers were optimized for CPU virtualization, modest power densities, and predictable load. Frontier AI breaks every one of those assumptions. Training a single foundation model now demands tens of thousands of accelerators operating as one logical machine and drawing more power than a small city.

The result is a re-architecture from first principles. Cooling moves from air to fluid. Power moves from grid-following to grid-forming. Network moves from packet-switched commodity Ethernet to non-blocking optical fabric. Real estate moves from cheap land to grid-adjacent strategic terrain.

Infrastructure is no longer a cost center. It is the moat.

Four constraints that define the design.

- Power: Predictable, dense, grid-forming. AI loads do not tolerate brownouts.

- Thermal: Heat is the new wall. Liquid is the only path forward at frontier density.

- Latency: Tightly coupled training demands sub-microsecond fabric latency. Distance is friction.

- Coordination: Power, cooling, network, and compute must be governed as one system.
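Two of these constraints reduce to back-of-envelope physics. The sketch below is illustrative only: it assumes light travels through silica fiber at roughly 2 × 10^8 m/s, that the coolant behaves like plain water, and that the coolant warms by 10 K across the rack. The function names and the 10 K figure are our own assumptions, not measured values.

```python
# Illustrative physics only. Every constant here is an assumption,
# not a vendor or site figure.

FIBER_SPEED_M_PER_S = 2.0e8    # approx. speed of light in silica fiber (about c / 1.5)
WATER_CP_J_PER_KG_K = 4186.0   # specific heat of water; glycol mixes are lower
WATER_DENSITY_KG_PER_L = 1.0   # approx. density of water


def max_fiber_run_m(latency_budget_s: float) -> float:
    """Longest one-way fiber run whose propagation delay alone fits the budget.

    Ignores switch, serialization, and transceiver delay, so real reach is shorter.
    """
    return FIBER_SPEED_M_PER_S * latency_budget_s


def coolant_flow_l_per_min(heat_w: float, delta_t_k: float) -> float:
    """Water flow needed to carry away heat_w watts at a coolant rise of delta_t_k.

    Uses the steady-state heat balance Q = m_dot * c_p * dT.
    """
    mass_flow_kg_per_s = heat_w / (WATER_CP_J_PER_KG_K * delta_t_k)
    return mass_flow_kg_per_s / WATER_DENSITY_KG_PER_L * 60.0


if __name__ == "__main__":
    print(f"1 microsecond of fiber: {max_fiber_run_m(1e-6):.0f} m")
    for rack_kw in (100, 250):  # density range quoted on this page
        flow = coolant_flow_l_per_min(rack_kw * 1000.0, delta_t_k=10.0)
        print(f"{rack_kw} kW rack at 10 K rise: {flow:.0f} L/min")
```

Under these assumptions, a one-microsecond budget is consumed by about 200 m of fiber before any switching delay is counted, and a single rack at the quoted densities needs on the order of 143 to 358 litres of water per minute.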

The four constraints, photographed.

From cold plate to substation. Each constraint translates into a physical artifact the team must reason about.

The grid is the new substrate.

AI training drives continuous loads of several hundred megawatts, with non-linear ramps. Building the infrastructure of intelligence is, increasingly, an energy infrastructure problem disguised as a computing one.
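To put the scale in concrete terms, here is a rough sizing sketch. The 300 MW campus is a hypothetical example, not a client figure. It counts IT load only and ignores cooling and distribution overhead. The rack densities are the 100–250 kW/rack target quoted on this page.

```python
import math

HOURS_PER_YEAR = 8760  # non-leap year


def racks_for_load(it_load_mw: float, rack_kw: float) -> int:
    """Number of racks needed to host it_load_mw of IT load at rack_kw per rack."""
    return math.ceil(it_load_mw * 1000.0 / rack_kw)


def annual_energy_gwh(continuous_load_mw: float) -> float:
    """Energy drawn in one year by a load that never ramps down."""
    return continuous_load_mw * HOURS_PER_YEAR / 1000.0


if __name__ == "__main__":
    campus_mw = 300.0  # hypothetical "multi-hundred-megawatt" site
    for rack_kw in (100.0, 250.0):
        print(f"{rack_kw:.0f} kW/rack: {racks_for_load(campus_mw, rack_kw)} racks")
    print(f"Annual energy: {annual_energy_gwh(campus_mw):.0f} GWh")
```

At these assumed figures the same campus holds between 1,200 and 3,000 racks, and a continuous 300 MW draw adds up to roughly 2.6 TWh per year.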

Audit your AI roadmap with us.

A small number of engagements per quarter. We work with sovereign funds, frontier labs and hyperscale operators on infrastructure that lasts.