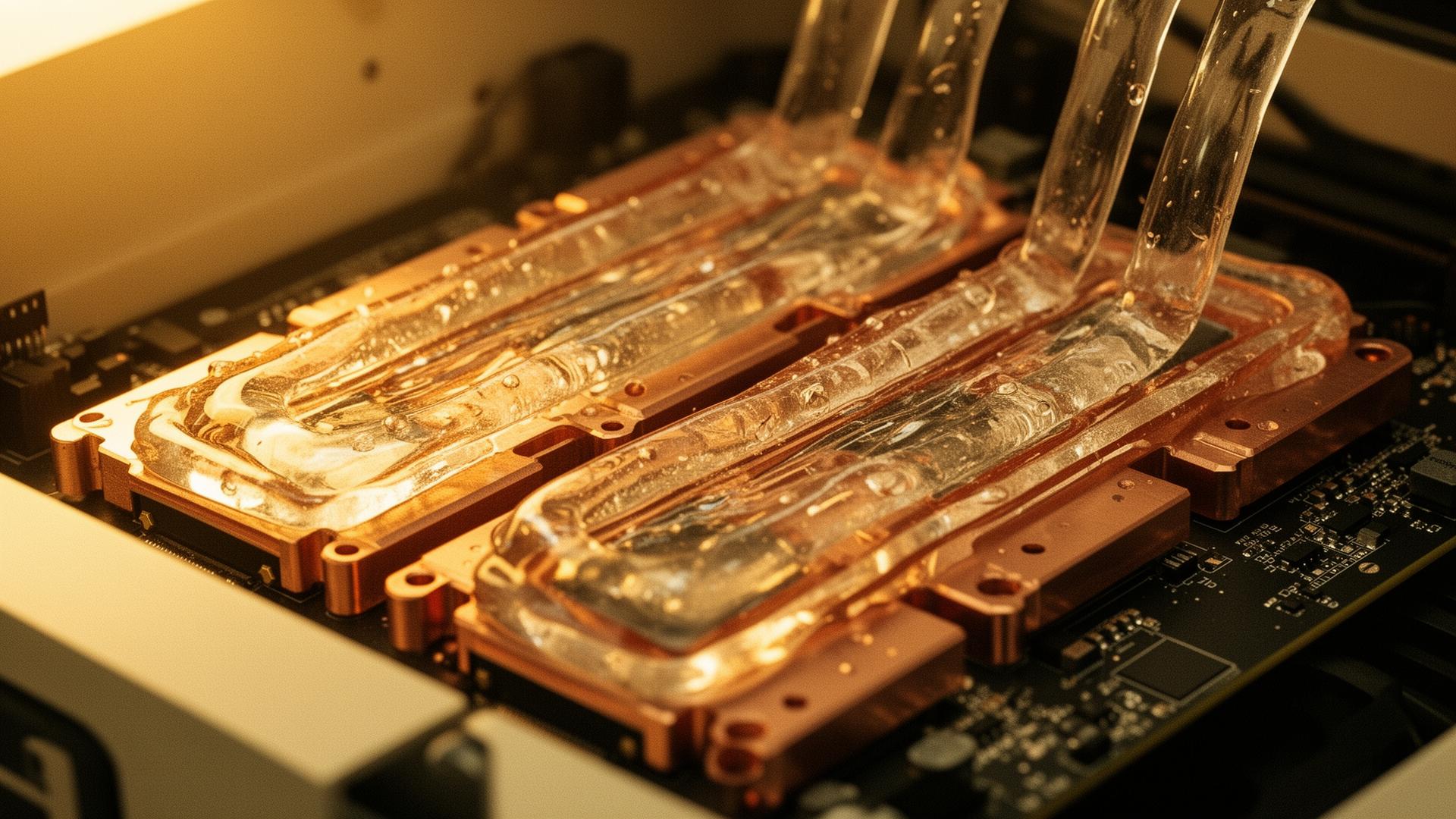

Thermal envelope collapse

Air-cooled facilities top out around 30 kW per rack. Frontier AI training nodes are now specified at 120–250 kW, roughly four to eight times that ceiling. The gap is not incremental: it requires a different physical paradigm.

- Liquid-to-chip cold plates

- Rear-door heat exchangers

- Two-phase immersion options
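
As a rough illustration of why the jump from 30 kW to 120–250 kW pushes designs toward liquid, the sketch below estimates the coolant flow needed to carry a given heat load using the sensible-heat relation Q = ρ · V̇ · c_p · ΔT. Everything beyond the three power figures is an assumption made for illustration: the fluid properties are textbook approximations, the 15 K air and 10 K water temperature rises are arbitrary, and `volumetric_flow_m3_per_s` is a hypothetical helper name. It covers single-phase air and water only, not two-phase immersion.

```python
# Illustrative fluid properties (assumed textbook values, not from the source).
AIR_DENSITY = 1.2       # kg/m^3, air near room temperature
AIR_CP = 1005.0         # J/(kg*K)
WATER_DENSITY = 997.0   # kg/m^3
WATER_CP = 4180.0       # J/(kg*K)

# Assumed coolant temperature rises across the rack (arbitrary choices).
AIR_DELTA_T_K = 15.0
WATER_DELTA_T_K = 10.0


def volumetric_flow_m3_per_s(heat_w, density, cp, delta_t_k):
    """Volume flow needed to absorb heat_w watts at a temperature rise of delta_t_k.

    Rearranged from Q = density * flow * cp * delta_t.
    """
    return heat_w / (density * cp * delta_t_k)


# Power figures from the text: the air-cooled ceiling and the 120-250 kW range.
for rack_kw in (30, 120, 250):
    heat_w = rack_kw * 1000
    air = volumetric_flow_m3_per_s(heat_w, AIR_DENSITY, AIR_CP, AIR_DELTA_T_K)
    water = volumetric_flow_m3_per_s(heat_w, WATER_DENSITY, WATER_CP, WATER_DELTA_T_K)
    print(f"{rack_kw:>4} kW: air {air:6.2f} m^3/s, water {water * 1000:5.2f} L/s")
```

Under these assumptions the required airflow scales linearly with the load, so a 250 kW load needs about eight times the air volume of a 30 kW rack, while the equivalent water flow stays in the range of a few litres per second. Real designs depend on inlet temperatures, pressure drop, and component limits that this sketch ignores.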